#Datapipeline: The T in ETL with SQL

by bernt & torsten

If you read this I assume you are familiar with Extract Transform and Load (ETL), this is just a short article to show how you can do ETL and especially T, the transform using a SQL statement.

A word on ETL

ETL is nothing new, it has been around for a while, the ETL process became a popular concept in the 1970s when computers were coming online and starting to generate digital data. Apart from storing data, the data needed a process to prepare the data for downstream applications. That is why ETL become an important concept.

When data warehouses emerged in the early ’90s a centralized location for data and its transformations emerged. These data warehouses were still limited in the formats they used and the operations you could run within them, so intermediary steps (transformations) were needed before loading the data in.

Since then, data warehouses and data stores in general, have added transformational abilities with SQL support, views, jobs and stored procedures.

A word on data structures

Let’s first take a look at data structures and why it is important to understand that there are three common types of data structures. The why: knowing your data structure makes your task easier to select the method and tool to do your extract, transformation and load. The view shows the most common data structures.

My Data Pipeline Architecture

The following views show the GCP architecture that I used for my Data Pipeline with SQL

The Application Data

I had built a pipeline that was using a Cloud Function to Extract data and store it in a Cloud Storage bucket, which I had used to build the Maritime noon report with Google Cloud Dataprep. I made one change to the old architecture in that I made a change to replace the local MySQL database with a Cloud SQL MySQL instance and point my application to the Cloud SQL MySQL database.

Structured Data

I am using data from a Cloud SQL MySQL database that adheres to a pre-defined data model conforming to a tabular format with a relationship between the different rows and columns.

The Query View

My task was to create a view in BigQuery from the Cloud SQL database, to do this I had to enable the BigQuery Connection API to be able to use Cloud SQL Federated Queries. For instructions visit the Google Documentation on Cloud SQL Federated Queries.

Below is the SQL I used in BigQuery to set up my view, by running this query I saved the result as a view, using the view allows for any updates to the Cloud SQL database tables that are used by the SQL statement to be automatically past on to the view.

The SQL statement below is not a complete SQL statement, should give you an idea of how you could go about using this type of data transformation on your own SQL database.

SELECT

CASE

WHEN ship=1 THEN "Shore"

WHEN ship=2 THEN "MYEZ"

WHEN ship=3 THEN "MYAS"

WHEN ship=4 THEN "MYRW"

ELSE "Shore"

END as ship,

local_time,

......

position_lat,

position_lon,

CONCAT(position_lat, ', ', position_lon) as position,

ports.name as port_of_call,

countries.country as countryid,

ship_activities.activity as ship_activity,

CONCAT(personaldata.names, ' ', personaldata.family_names) as report_by,

.................

FROM noon_report

LEFT JOIN ports ON noon_report.next_port=ports.id

LEFT JOIN countries ON noon_report.countryid=countries.id

LEFT JOIN ship_activities ON noon_report.ship_activity=ship_activities.id

LEFT JOIN personaldata ON noon_report.report_by=personaldata.id

SQL Transformation

In my SQL statement, I have a few transformations, for that, I used LEFT JOIN – to replace the values pointing to another table with the actual value.

I used CONCAT to use to fields to make a new field with the values from the two fields. The CONCAT for the new position fields is to create a new field that has latitude and longitude as xxxxx, xxxxx. This new field position is used for using a MAP in Data Studio, I could have done it in Data Studio I like to keep a transformation in one place, it just makes it simple.

The other contact was to use the first name and last name to create a field called report_by, this field is to know who filled in the noon report and submitted it.

As I mention my transformations were simple, I would not need a tool like Cloud Dataprep which I used in the first version of this data pipeline, it costs to use Cloud Dataprep for transformations, A SQL statement is free, and the end result of the transformation is the same.

Wrap Up

You may wonder why I did not do this the first time, the reason for that is that Cloud SQL MySQL and Cloud SQL Federated Queries were not available the first time I set up Maritime Noonreport. At the time when I build the first version of the data pipeline, I used the tools that were available to me at the time and it is always a good practice to review your data pipelines and make adjustments to performance, code updates and cost.

That is all folks, if this is useful information do not hesitate to donate to our efforts of bringing good readable content with instructions.

Tech Disillusionment

For four decades, I have worked in the tech industry. I started in the 1980s when computing...

A Poem: The Consultant's Message

On a Friday, cold and gray,

The message came, sharp as steel,

Not from those we...

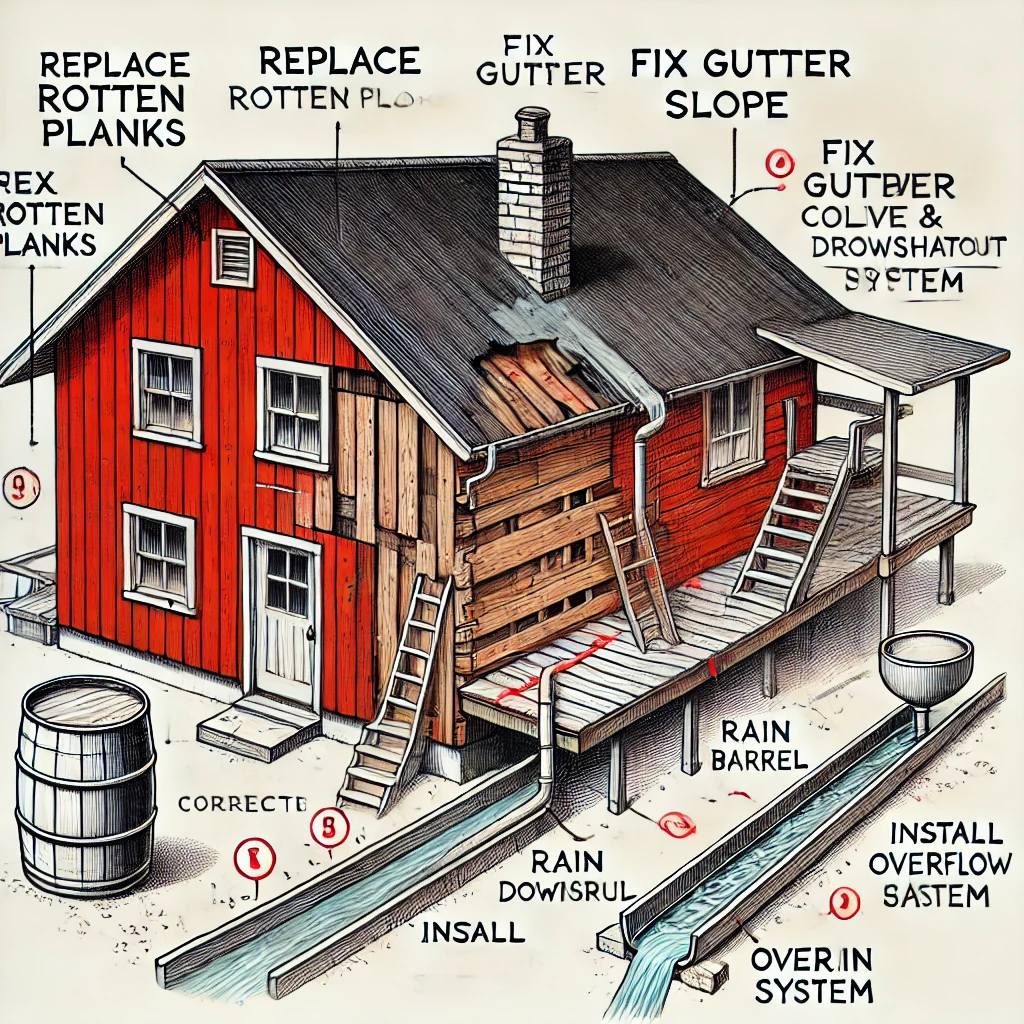

Using AI to Plan Wall Repair and Gutter Installation

In this article, I will share my experience using AI to plan the work required to fix a wall...