Maritime noon report with Google Cloud Dataprep

by bernt & torsten

I recently worked on an exciting project, the use case for the project was to make the maritime noon report data available in Google BigQuery, which sounds simple enough. However, there were a lot of requirements, from collecting application data from the Greenpeace fleet in different formats leveraging Cloud Functions and API calls, landing the data in Cloud Storage, normalizing the data for reporting and translating each other ships’ schemas into one standard schema in BigQuery thanks to Google Cloud Dataprep by Trifacta, and finally automating the end to end process with Cloud Scheduler if you want to learn more read on.

What is a maritime noon report? It’s a report that gives a snapshot of what has happened onboard a ship since the previous noon, i.e., in the last 24 hours. Noon report is used by almost every shipping company today to understand and monitor what is happening on their ships. These reports are traditionally sent by the captain every day, based on data gathered manually by the crew. The content and format of the noon report are usually created by shipping companies and can vary much between different shipping companies.

I will explain how I standardized the Greenpeace International noon report and moved it to the Google Cloud Platform in this how-to. The solution had many components, and I will not go through the details about the Emily application running the Noon report where data is entered daily and the Emily shore server. This how-to focuses on the services used in the Google Cloud Platform (GCP) and how the various GCP services were used.

The GCP Architect Services

Let’s overlook the GCP Architecture for the noon report, as shown in the diagram below. I will go into the GCP specifically throughout this how-to so that you can replicate parts.

Emily shore server

The purpose of the shore server is to collect daily the noon report data from the Greenpeace ships. The mechanism to request the vessel’s data is with a restFul API with a unique API key for authentication.

The API call is made every two hours using crontab on the Emily shore server. The API request is made with a sync date to keep the network bandwidth at a minimum. The sync date is tracked on the shore server, and if there is a timeout or error in the API call, the sync date does not update. And the next time the crontab runs, it takes the same date.

Syncing every two hours compensates for the timezone the ships may be in when a staff member on board a ship enters the noon report data. There is a manual sync dashboard in the Emily application where data can be re-synced from the ships. The sync dashboard looks like this.

Cloud Function

To create a data pipeline from the shore server to Cloud Storage, a Cloud Function was created that has two purposes requesting data from the Shore server through a restFUl API request and storing the result of the API request as a .json unstructured file in Cloud Storage.

Security is a big issue when your create a Cloud Functions, you can read more about Securing HTTP trigger in Google Cloud Functions and Using Secret Manager in a Google Cloud Function

I’m not going into details of the Cloud Function as it is self-explanatory. You can find the cloud function code on GitHub.

The Cloud Function GitHub REPO comes with an .yaml file so you can setup an automatic deployment build process - you can read more how to do in this article Using Cloud Build as you CI/CO for multiple Cloud Functions

Cloud Storage

The cloud functions save the file request by the API call in the Google Cloud Storage in a predefined bucket. The file is replaced daily.

Cloud Scheduler

Using Cloud Scheduler, a frequency is set to trigger the cloud function to run, which then replaces the current file in Cloud Storage. You can manually run the Cloud Scheduler from the Cloud Scheduler dashboard.

Google Dataprep by Trifacta

Google Dataprep by Trifacta was the critical service to make the transition from old tables structure to a single table structure and to migrate historical data from 3 different table structures to a single table structure and take unstructured data and transform it into structured data and store it in a dataset in BigQuery.

SQL vs Normalized

Just a word on the noon report data that Dataprep will turn into normalized data that are easier to use when creating reports. The noon report on the ships and shore server is running in a MySQL environment, which means several tables are used that index each other, the API call to the shore server only returns index data.

A table record could look like this, with indexes pointing to a different table with the value.

| ship | status | location | next_port |

| 2 | 3 | 5 | 6 |

Normalized data shows a record that can be understood, like this.

| ship | status | location | next_port |

| Rainbow Warrior | At Sea | North sea | Amsterdam |

Dataprep by Trifacta

An Extract Transform Load (ETL) tool or similar was required to make the data structured. There are a few choices in the Google Cloud Platform to achieve these transformations. I chose Cloud Dataprep by Trifacta to do the ETL transformation job; for this reason, Cloud Dataprep is a data service for visually exploring, cleaning, and preparing structured and unstructured data for analysis, reporting, and machine learning.

The great thing with Cloud Dataprep is that it’s serverless and works at any scale. There is no infrastructure to deploy or manage. To use Dataprep, you do not need to write code. Dataprep is relatively simple to use, and you can break it down into a few areas:

Flows

A flow is a collection of datasets, recipes, and other objects you use to generate results. You own the flow, and you can share them; you can also export and import flows. You can also schedule a flow to run at a specific time in a particular time zone.

Data Library

The data library is a collection of datasets that you have imported, they could be a BigQuery dataset, a CSV or JSON file stored in Google Cloud Storage, or you imported directly from your computer. In the Cloud Dataprep premium version, you can connect to more options, e.g. Salesforce, MySQL server, and Google Sheet.

Recipe

A recipe is many steps in which you transform your data, and the recipe user interface is very intuitive you get a good view of your data, highlighting data, you get a suggestion on what step you want to take for that specific data column, you can create many steps to build up a recipe.

Run

The run option is when you process your recipe and publish your now structured dataset to BigQuery or Cloud Storage. Dataprep uses Google Cloud Dataflow to run your recipe; instead of building your Dataflow by creating the flow, you can use Dataprep UI to achieve some great transformation when you run it.

Dataprep Story

In the next part of this how-to article, I will explain in a bit more detail how to transform the Noonreport data; there were three steps that I had to go through. The first two steps are only repeated once, and the last step is a continuous process that is repeated daily.

- Merging table structure

- Daily get new noon report data

- Masterlog

Merging table structure

Noonreport format has no standard as to what data is collected, each shipping company has their own. It could also be that each ship has its own table structure depending on the data collected from a ship, and no ship is alike, so in our case, we ended up with a noon report from our ships that had a difference in their table structure.

The first task was to create a common table structure; we created a new table structure and deployed them to the MySQL databases on our ships and our shore server. When the new structure was defined, a data flow was built with many recipes in Cloud Dataprep to merge not only the data from the table structure to the new table structure.

To merge the table structures in Dataprep, I imported the data from the old tables and the table structure each for each table structure; I applied a recipe to do some cleanup of the data, then I created a recipe for merging all the tables into one structure by using the union step in the recipe. The union step looks like this:

The output of this step was one table structure. To complete this step, a Job was run to publish the data in a BigQuery table named merged_noonreport. Merging and standardizing the table structure for the noon report was only done once.

Daily get new noon report data.

The Cloud Scheduler runs every 2 hours and triggers a Cloud Function that reads data from the shore server and stores the .json response file in a Cloud Storage bucket; the .json file is an unstructured dataset. In Cloud Dataprep, a recipe turns unstructured data into structured data.

The Dataprep schedule is set to run once daily, and it will run if it detects a new .json file in the Cloud Storage Bucket. The Dataprep will automatically run the job and publish the file to BigQuery using the dataset name raw_noonreport

The Masterlog

Creating the structured normalized master log data, I created a Dataprep flow that uses two BigQuery tables as input datasets with the names merged_noonreport and raw_noonreport, for which I had previously created two transformation flows in Dataprep.

The noon report transformation is the step to create the structure normalized ships_masterlog dataset, one of the main recipe steps used in this transformation was the lookup recipe step which I used to transform the unstructured data into structured data.

In Dataprep, I created a recipe for each dataset that I was going to use in the main recipe for the lookup step. I did this to limit the number of columns that came from each dataset that was being used for the lookup step. I kept the dataset for the lookup step to two columns; if I had used each dataset’s entire column, the final recipe, I would have to delete all the lookup columns that were not required.

The lookup datasets were each original MySQL table exported from the Emily MySql shore server database as CSV files and stored in Cloud Storage before being imported to Cloud Dataprep.

P.S. In the Dataprep Premium version, you could import your MySQL tables to Google Sheets and use the Google Sheets as the lookup table in your Dataprep flow.

Google BigQuery

BigQuery is the final destination of the transformed data and is the data warehouse for storing datasets used for the transformations with Dataprep. BigQuery also acts as a datamart for structured normalized data, and with noonreport, the structured normalized dataset is named ships_masterlog.

With the structured normalized dataset ships_masterlog, reporting with Data Studio or importing from the dataset to Google Sheets is obtainable. The structured normalized data can also be used in BigQuery to create a machine model of some data elements in the dataset to predict, for instance, fuel consumption under certain weather conditions. That will be something to add to the list for the future.

Below are just the datasets that were created in BigQuery. The raw_noonreport and merged_noonreport are unstructured data that Dataprep uses for the structuring and normalization of the data.

Cost

The datasets are not that large; even running it daily, the free tier covers many of the services that are used, there is a cost for Dataprep that uses Dataflow to process data into BigQuery, and there is a small cost for Cloud Storage storing the files and BigQuery, the total cost is less than 50 Euro a month.

Wrapping Up

That was the step to creating a maritime noon report table in BigQuery using the Dataprep tool. I hope you learned from this and can apply some of these solutions to your Data Project.

If you want to be able to control your Google Cloud Platform cost, this is a great article to read –

Disable Billing for Google Cloud Project when billing exceeds the budget limit

Want to get in contact? The comment box below is the best way to interact with me.

Tech Disillusionment

For four decades, I have worked in the tech industry. I started in the 1980s when computing...

A Poem: The Consultant's Message

On a Friday, cold and gray,

The message came, sharp as steel,

Not from those we...

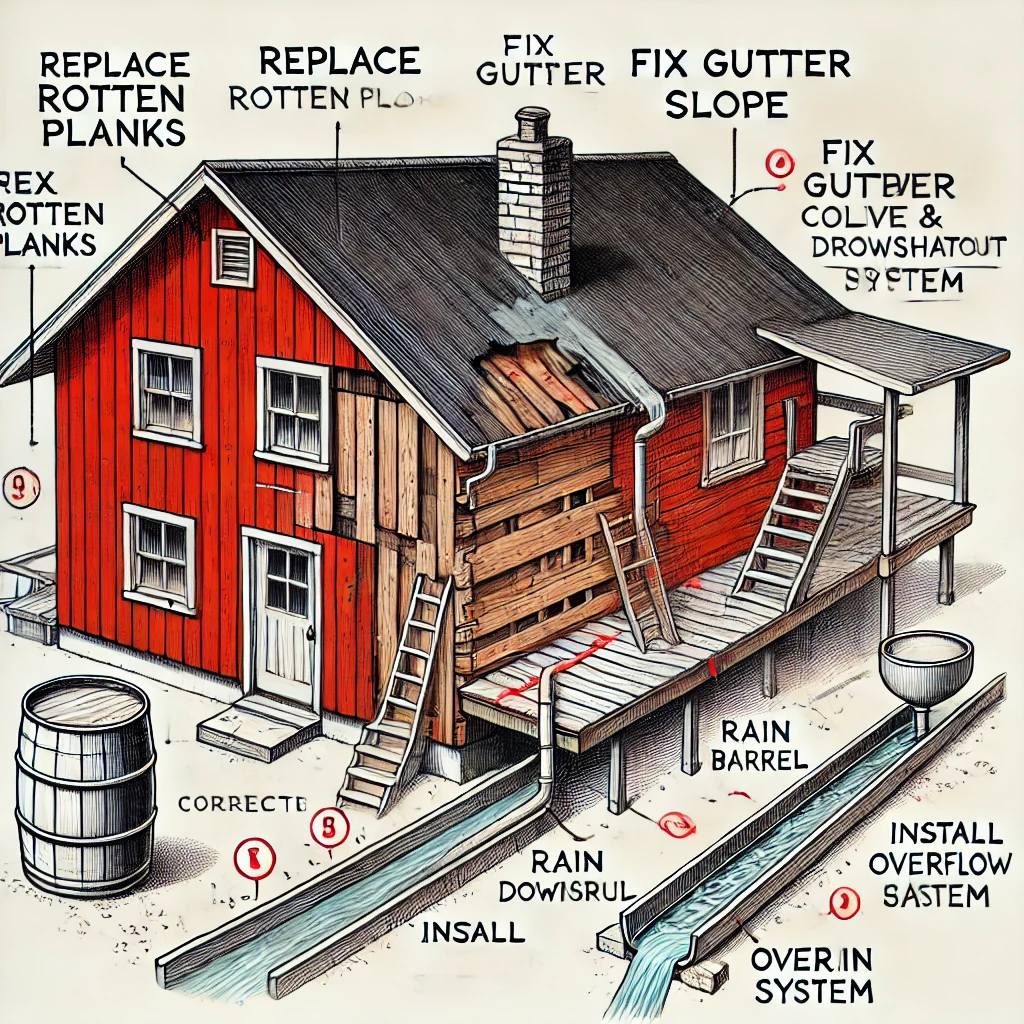

Using AI to Plan Wall Repair and Gutter Installation

In this article, I will share my experience using AI to plan the work required to fix a wall...