The shift from data pipelines to data products

by bernt & torsten

The shift from data pipelines to data products is a major trend in the industry today. Data products are more sophisticated and provide more value than data pipelines. They are the next step in the evolution of data management.

Data consumers such as data analysts and business users are primarily concerned with the production of data assets. On the other hand, data engineers have historically focused on modelling dependencies between tasks (rather than datasets) using orchestration tools.

In the era of big data, we have successfully calculated massive amounts of data, with high-scale computation and storage, and can efficiently query datasets of any size. But as a side effect, data teams are growing rapidly, with new data sources every day, creating a more complex data landscape. While every data project starts out simple, it becomes very complex over time.

The question is no longer how do we transform data overnight or create a DAG with a modern data orchestrator, but how do we get an overview of all processed and stored data. How do we do this conversion?

- Think of data as a product with data-aware pipelines that understand the inner workings of tasks

- Switch to Declarative Data Pipeline Orchestration

- Reuse code across complex cloud environments using abstraction

- Use functional data engineering methods and idempotent functions to make Python a first-class citizen.

One solution to this shift is to focus on data assets and products with great tools by taking the technology layer back and allowing data consumers to access data products. As Data Mesh popularizes data as a product, next we’ll see how this change can be applied to data orchestrators. Think of data as a use case, with a clear owner and custodian for every data product that data consumers want.

In the end, all data has to come from somewhere and go somewhere. A modern data pipeline orchestrator is the layer that connects all these tools, data, practitioners and stakeholders.

Achieving the transition from pipelines to business logic-centric product views of data is a challenging technical problem that requires ingesting data from dozens of external data sources, SaaS applications, APIs, and operating systems. to give an example of a data product, I recently build a pipeline YouTube Analytics to BigQuery with Airbyte. The Airbyte product has all the connectors that I needed to ease the hard parts of E(xtract) and L(oad) and change data collection (CDC).

Data products do not have to reside in an orchestration tool. The orchestrator only manages dependencies and business logic. These assets are primarily spreadsheets, files, and dashboards that reside somewhere in a data warehouse, data lake, or BI tool.

Tech Disillusionment

For four decades, I have worked in the tech industry. I started in the 1980s when computing...

A Poem: The Consultant's Message

On a Friday, cold and gray,

The message came, sharp as steel,

Not from those we...

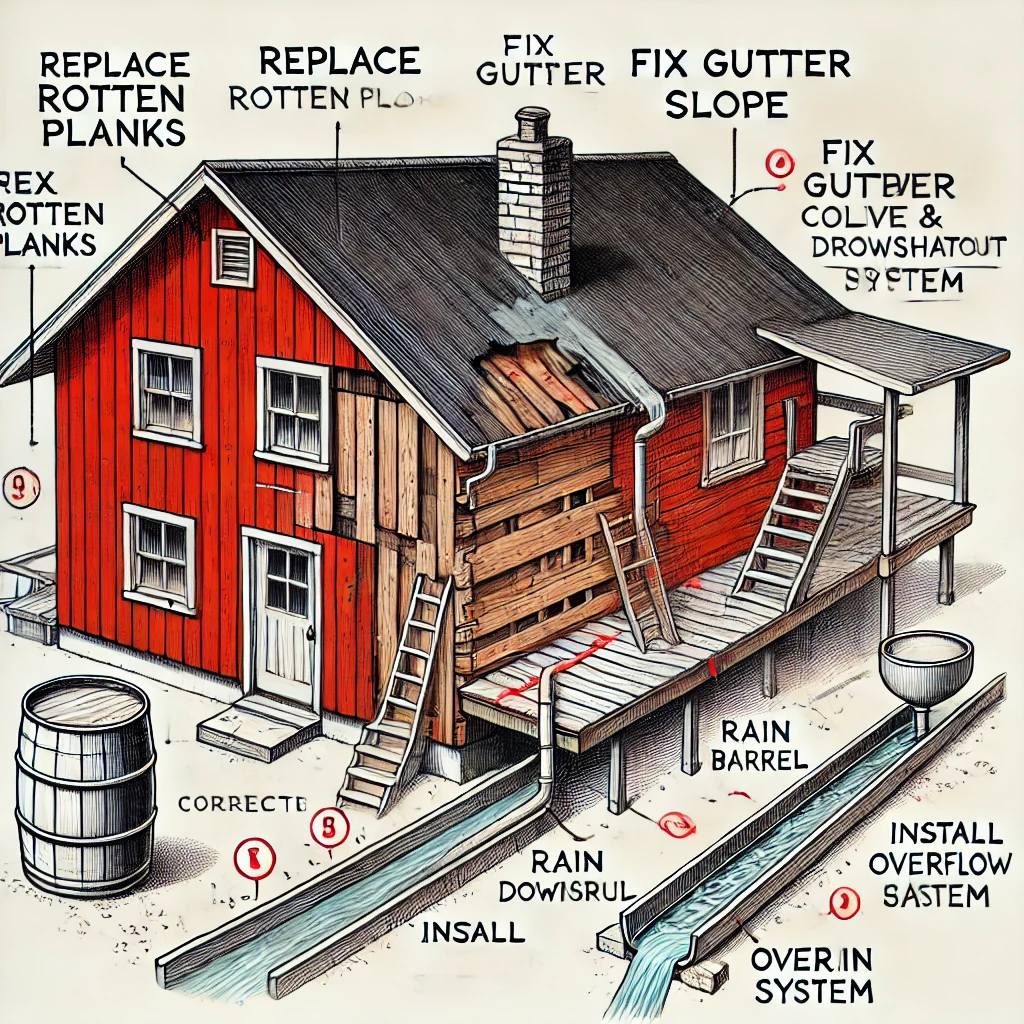

Using AI to Plan Wall Repair and Gutter Installation

In this article, I will share my experience using AI to plan the work required to fix a wall...