How to troubleshoot a slow Linux server

by bernt & torsten

If you find your server is running slowly, how do you troubleshoot it to find out what is causing the problem? Let's start by looking at the server itself. Log in to your server and run the top command:

root@bash:/# top

As you can see from the image, the load average is very good. But say your load average looked something like this instead: 8.36, 8.27, 8.33

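That is way too high. As a rule of thumb, a load under 1 per CPU core is normal, so 8.36 is excessive on a single-core server and still heavy on a four-core one. The three numbers in the load average are averages over the last one, five and fifteen minutes. A load of 0 means an idle server. Each process using or waiting for the CPU adds 1 to the load (on Linux, processes blocked on disk I/O are counted as well).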
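To make the per-core comparison concrete, here is a minimal Python sketch. The function names are my own, invented for illustration. On a Linux machine the three load figures can be read from /proc/loadavg and the core count from os.cpu_count(); elsewhere the sketch falls back to the example figures from this article.

```python
import os


def parse_loadavg(text):
    """Return the 1-, 5- and 15-minute load averages as floats.

    Accepts the /proc/loadavg format ("8.36 8.27 8.33 2/345 12345")
    as well as the comma-separated form that top and uptime print.
    """
    one, five, fifteen = (float(x) for x in text.replace(",", " ").split()[:3])
    return one, five, fifteen


def load_per_core(load, cores):
    """Normalise a load figure by the number of CPU cores."""
    return load / max(cores, 1)


if __name__ == "__main__":
    # /proc/loadavg exists on Linux only; otherwise use the article's example.
    try:
        with open("/proc/loadavg") as fh:
            raw = fh.read()
    except OSError:
        raw = "8.36, 8.27, 8.33"
    cores = os.cpu_count() or 1
    for label, value in zip(("1 min", "5 min", "15 min"), parse_loadavg(raw)):
        print(f"{label}: {value:.2f} total, {load_per_core(value, cores):.2f} per core")
```

A per-core figure that stays well above 1 across all three periods suggests a sustained problem rather than a short spike.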

The next line of the top output tells you a bit more about CPU-bound load:

%Cpu(s): 98.5 us, 1.2 sy, 0.0 ni, 0.0 id, 0.0 wa, 0.0 hi, 0.3 si, 0.0 st

- us stands for User CPU time

- sy for system CPU time

- id is CPU idle time

- wa is I/O wait time, the share of time the CPU sat idle waiting for I/O to complete

So we can see that the load here is CPU-bound: nearly all of the CPU time is user time. High user CPU time is caused by a process run by a user on the system, such as Apache, MySQL, a shell script, or someone trying to break in.
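If you want to check that line from a script, the following Python sketch parses it and reports which non-idle field dominates. The helper names are hypothetical, and the regular expression assumes the two-letter labels that top prints in the line shown above.

```python
import re


def parse_cpu_line(line):
    """Turn a top '%Cpu(s):' summary line into a {label: percentage} dict."""
    pairs = re.findall(r"([\d.]+)\s+([a-z]{2})", line)
    return {label: float(value) for value, label in pairs}


def dominant_field(stats):
    """Return the label of the non-idle field with the highest percentage."""
    busy = {label: value for label, value in stats.items() if label != "id"}
    return max(busy, key=busy.get)


sample = "%Cpu(s): 98.5 us, 1.2 sy, 0.0 ni, 0.0 id, 0.0 wa, 0.0 hi, 0.3 si, 0.0 st"
stats = parse_cpu_line(sample)
print(stats["us"], dominant_field(stats))
```

A dominant us points at user processes, while a dominant wa points at disk or swap activity, which the following sections cover.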

If your process list looks similar to this:

23441 apache 20 0 672580 125652 37292 R 24.0 2.1 133:38.60 httpd

24876 apache 20 0 573028 96392 33076 R 24.0 1.6 129:57.04 httpd

27342 apache 20 0 685680 127304 37260 R 24.0 2.1 124:16.81 httpd

27384 apache 20 0 573648 98356 34080 R 24.0 1.7 126:58.46 httpd

23451 apache 20 0 685704 129500 40228 R 23.3 2.2 126:47.23 httpd

Apache has forked many processes to handle client connections, and together they are taking up a lot of CPU time. This can be due to high traffic, or it could be a Distributed Denial of Service (DDoS) attack that forces Apache to fork a process for each connected client.
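With only five rows it is easy to see that httpd dominates, but on a busy server the list is long. As a rough sketch, the Python below adds up the %CPU column per command name. It assumes top's default column order (PID, USER, PR, NI, VIRT, RES, SHR, S, %CPU, %MEM, TIME+, COMMAND); the function name is hypothetical.

```python
from collections import defaultdict


def cpu_by_command(rows):
    """Sum the %CPU column per command name from top's process rows."""
    totals = defaultdict(lambda: {"procs": 0, "cpu": 0.0})
    for row in rows:
        fields = row.split()
        # Skip header lines, blank lines and anything that is not a process row.
        if len(fields) < 12 or not fields[0].isdigit():
            continue
        command = fields[11]              # COMMAND is the 12th column by default
        totals[command]["procs"] += 1
        totals[command]["cpu"] += float(fields[8])  # %CPU is the 9th column
    return dict(totals)


rows = [
    "23441 apache 20 0 672580 125652 37292 R 24.0 2.1 133:38.60 httpd",
    "24876 apache 20 0 573028 96392 33076 R 24.0 1.6 129:57.04 httpd",
    "27342 apache 20 0 685680 127304 37260 R 24.0 2.1 124:16.81 httpd",
    "27384 apache 20 0 573648 98356 34080 R 24.0 1.7 126:58.46 httpd",
    "23451 apache 20 0 685704 129500 40228 R 23.3 2.2 126:47.23 httpd",
]
for command, info in cpu_by_command(rows).items():
    print(f"{command}: {info['procs']} processes, {info['cpu']:.1f}% CPU in total")
```

Note that top reports %CPU per core, so a total above 100 simply means more than one core is busy.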

Run out of available RAM

The next cause for the high load is a system that has run out of available RAM and has started to go into swap. Because swap space is usually on a hard drive that is much slower than RAM, when you use up available RAM and go into swap, each process slows down dramatically as the disk gets used.

What’s tricky about swap issues is that because they hit the disk so hard, it’s easy to misdiagnose them as I/O-bound load. After all, if your disk is being used as RAM, any processes that actually want to access files on the disk are going to have to wait in line. So, if I see high I/O wait in the CPU row at the top, I check RAM next and rule it out before I troubleshoot any other I/O issues.

Amount of RAM

To diagnose out of memory issues, the first place I look is the next couple of lines in the top output:

Mem: 1020076k total, 998000k used, 27000k free, 85520k buffers

Swap: 1004052k total, 4360k used, 999692k free, 280000k cached

These lines tell you the total amount of RAM and swap along with how much is used and free. Look carefully, though, as these numbers can be misleading. To get an accurate amount of free RAM, you need to add the free value to the cached value, because the kernel gives cached memory back when processes need it. In this example, that is 27000k + 280000k = 307000k, or about 300 MB of free RAM. In this case, the system is not experiencing an out-of-RAM issue. Of course, even a system that has very little free RAM may not have gone into swap. That's why you also must check the Swap: line and see if a high proportion of your swap is being used.
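The same arithmetic can be sketched in Python. The helper names are hypothetical, and the parsing assumes the older top layout shown above, where every value carries a k suffix and cached is printed on the Swap: line. Newer versions of top format these lines differently.

```python
import re


def parse_kb_fields(line):
    """Extract '<number>k <label>' pairs from a top Mem: or Swap: line."""
    return {label: int(value) for value, label in re.findall(r"(\d+)k\s+(\w+)", line)}


def memory_summary(mem_line, swap_line):
    """Return (free + cached in kB, percentage of swap in use)."""
    mem = parse_kb_fields(mem_line)
    swap = parse_kb_fields(swap_line)
    usable_kb = mem["free"] + swap["cached"]  # 'cached' sits on the Swap: line
    swap_used_pct = 100.0 * swap["used"] / swap["total"] if swap["total"] else 0.0
    return usable_kb, swap_used_pct


mem_line = "Mem: 1020076k total, 998000k used, 27000k free, 85520k buffers"
swap_line = "Swap: 1004052k total, 4360k used, 999692k free, 280000k cached"
usable_kb, swap_used_pct = memory_summary(mem_line, swap_line)
print(f"{usable_kb} kB usable, {swap_used_pct:.2f}% of swap in use")
```

With the example figures, well under 1% of swap is in use, which matches the conclusion that this system is not short of RAM.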

If you do find you are low on free RAM, go back to the same process output from top, only this time look at the %MEM column. By default, top sorts by the %CPU column, so simply type M (capital M) and it will re-sort to show you which processes are using the highest percentage of RAM.

iostat

I/O-bound load can be tricky to track down, and a swapping system can make the load appear to be I/O-bound when the real problem is memory. Once you have ruled out swapping and you still have a high I/O wait, the next step is to track down which disk and partition are getting the bulk of the I/O traffic. To do this, you need a tool like iostat.

The iostat tool provides a good overall view of your disk I/O statistics. As with top, iostat gives you the CPU percentage output. Below that, it provides a breakdown of each drive and partition on your system, with these statistics for each:

- tps: transfers (I/O requests issued to the device) per second.

- Blk_read/s: blocks read per second.

- Blk_wrtn/s: blocks written per second.

- Blk_read: total blocks read.

- Blk_wrtn: total blocks written.

I/O traffic

By looking at these different values and comparing them to each other, ideally you will be able to find out, first, which partition (or partitions) is getting the bulk of the I/O traffic and, second, whether the majority of that traffic is reads (Blk_read/s) or writes (Blk_wrtn/s). As I said, tracking down the cause of I/O issues can be tricky, but hopefully those values will help you isolate which processes might be causing the load.
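As a sketch of that comparison, the Python below parses an iostat device table and picks out the busiest device and its dominant direction. The function names are hypothetical and the sample numbers are made up purely for illustration. The parser assumes the block-based column names listed above; newer sysstat versions report kB_read/s and kB_wrtn/s instead.

```python
def parse_iostat_devices(text):
    """Parse the device table of iostat output into a list of dicts."""
    rows, header = [], None
    for line in text.splitlines():
        fields = line.split()
        if not fields:
            continue
        if fields[0].startswith("Device"):
            header = fields[1:]           # column names after the Device label
            continue
        # Lines before the Device header (the avg-cpu section) are ignored.
        if header and len(fields) == len(header) + 1:
            values = dict(zip(header, map(float, fields[1:])))
            rows.append({"device": fields[0], **values})
    return rows


def busiest_device(rows):
    """Return the row with the highest combined read and write rate."""
    return max(rows, key=lambda r: r["Blk_read/s"] + r["Blk_wrtn/s"])


def read_or_write(row):
    """Say whether reads or writes dominate for one device row."""
    return "read" if row["Blk_read/s"] >= row["Blk_wrtn/s"] else "write"


# Made-up sample output, not taken from a real server.
sample = """\
Device:            tps   Blk_read/s   Blk_wrtn/s   Blk_read   Blk_wrtn
sda               9.00       120.00       850.00     120000     850000
sda1              8.50       118.00       849.00     118000     849000
sdb               0.20         3.00         0.50       3000        500
"""
top_row = busiest_device(parse_iostat_devices(sample))
print(top_row["device"], read_or_write(top_row))
```

In this invented sample the traffic is mostly writes to sda, and the partition rows show that almost all of it lands on sda1.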

For instance, if you have an I/O-bound load and you suspect that your remote backup job might be the culprit, compare the read and write statistics. You know that a remote backup job is primarily going to read from your disk, so if you see that the majority of the disk I/O is writes, you can reasonably assume it's not from the backup job. If, on the other hand, you do see a heavy amount of read I/O on a particular partition, you might run the lsof command and grep for that backup process to see whether it does in fact have some open file handles on that partition.
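The lsof check can also be scripted. The sketch below filters lsof output for one command and one mount point. The function name, the rsync backup process, the paths and the sample rows are all assumptions made up for illustration, and paths containing spaces are not handled.

```python
def open_files_under(lsof_text, command, mount_point):
    """Return paths from lsof output that `command` has open under `mount_point`."""
    prefix = mount_point.rstrip("/") + "/"
    paths = []
    for line in lsof_text.splitlines()[1:]:  # the first line is the column header
        fields = line.split()
        if len(fields) < 2 or not fields[0].startswith(command):
            continue
        name = fields[-1]                    # NAME is the last column
        if name.startswith(prefix):
            paths.append(name)
    return paths


# Invented sample in lsof's default layout; rsync stands in for the backup job.
sample = """\
COMMAND  PID USER   FD   TYPE DEVICE SIZE/OFF   NODE NAME
rsync   4312 root    3r   REG    8,2  1048576 131077 /home/data/archive.tar
rsync   4312 root    4u  IPv4  55512      0t0    TCP host:ssh->backup:ssh (ESTABLISHED)
httpd   2344 apache  5w   REG    8,1     2048  26010 /var/log/httpd/access_log
"""
print(open_files_under(sample, "rsync", "/home"))
```

On a live system the text could come from running lsof -c rsync through subprocess.run, since the -c option restricts the listing to commands whose name starts with the given string.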

Bad traffic

If the traffic is real and the number of connected clients is growing, that is a good thing. If it is bad traffic, there are countermeasures you can put in place to speed up the server.

- In case of a DDoS attack, install the Apache module mod_evasive. It is worth installing as a standard precaution, since it helps limit the impact of DoS and DDoS attacks.

- Optimize your application: profile your application’s code and try and optimize the areas that place the most load on the server.

- PHP could be the culprit. Adding a cache such as Memcached or eAccelerator would be a good option.

- Consider changing to a lower impact web server, such as nginx. This could increase the number of connections that the server can handle.

- Add page caching, using something like Varnish.

- Add another server, and load balance between them

- Increase the capabilities of the existing server (e.g. increase RAM, upgrade the processor, install a faster hard disk).

All of these options have a cost, in time or money, but something has to be done. The quickest wins, in my opinion, would probably be options 1, 2 and 3. Options 4 and 5 mean downtime while they're being installed.

Conclusion

Here are some useful articles to read:

Understanding Linux CPU Load – when should you be worried?

How to install mod_security and mod_evasive on an Ubuntu 14.04

How to Protect Against DoS and DDoS with mod_evasive for Apache on CentOS 7

Tech Disillusionment

For four decades, I have worked in the tech industry. I started in the 1980s when computing...

A Poem: The Consultant's Message

On a Friday, cold and gray,

The message came, sharp as steel,

Not from those we...

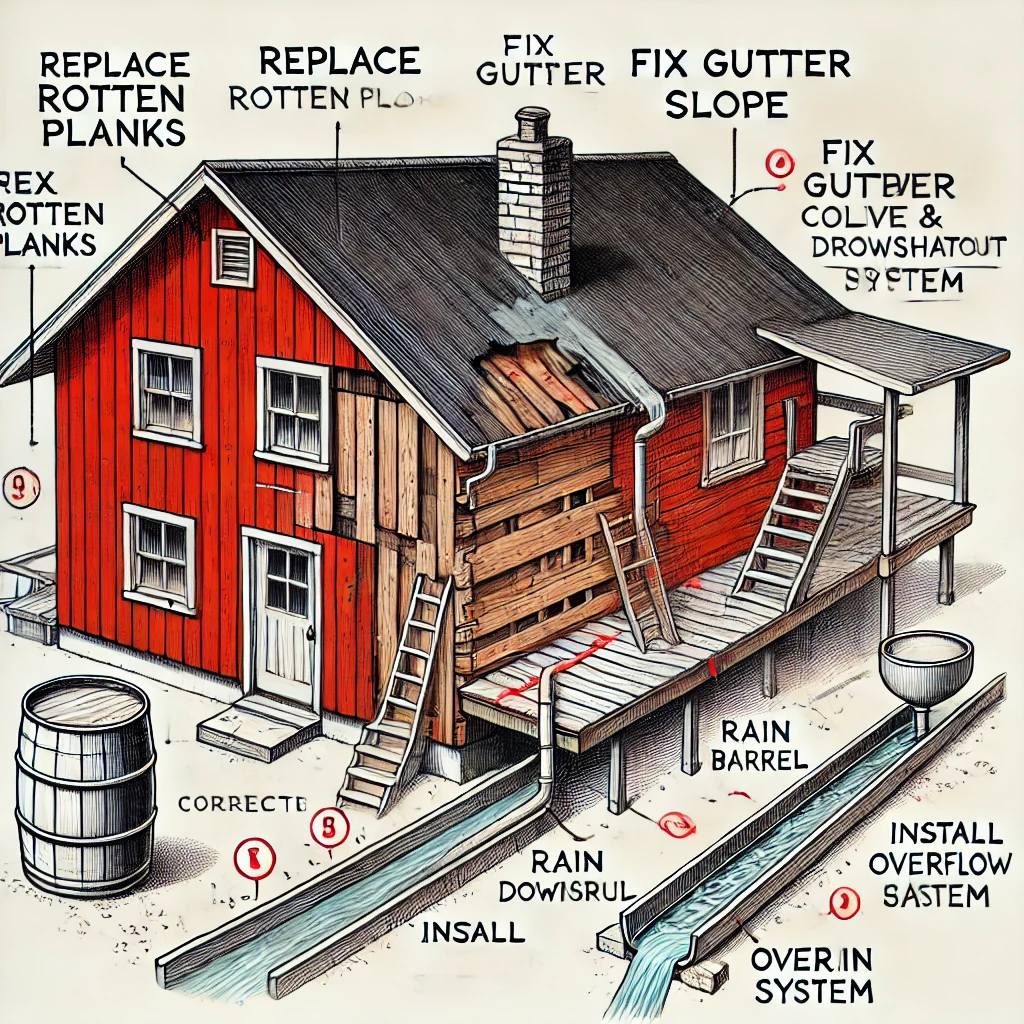

Using AI to Plan Wall Repair and Gutter Installation

In this article, I will share my experience using AI to plan the work required to fix a wall...