Expanding the Horizons of LLMs with Retrieval Augmented Generation and Vector Indexing

by bernt & torsten

Our previous explorations delved into the fascinating world of large language models (LLMs), uncovering their remarkable ability to process, understand, and generate human language. We also examined the various approaches to implementing LLMs, empowering you with the knowledge to harness their power in your applications. Now, we embark on a journey to explore Retrieval Augmented Generation (RAG), a technique that extends the capabilities of LLMs, enabling them to become even more powerful and versatile tools.

Empowering LLMs with External Knowledge

While incredibly capable, LLMs are limited by the information they have been trained on. This means they may be unaware of recent events or lack knowledge of specialized domains. Retrieval Augmented Generation (RAG) addresses this limitation by augmenting the LLM’s capabilities with information retrieved from external knowledge bases.

In a RAG system, a user’s query first goes to a retriever, which may draw on a website or a vector database such as Chroma, Pinecone, or Weaviate. The retriever pulls relevant context from the knowledge base, giving the LLM a broader and more up-to-date view of the topic.

The retrieved information is then combined with the user’s query and additional context prompts, forming an enhanced prompt that is sent to the LLM. This enriched prompt enables the LLM to generate more comprehensive, informative, and accurate responses, even for topics or questions that fall outside its initial training data.
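The flow described above can be sketched in a few lines. This is a minimal illustration, not a production design: the knowledge base is a hypothetical in-memory list, the retriever ranks documents by simple word overlap as a stand-in for a real vector search, and the call to the LLM is left out. The names `KNOWLEDGE_BASE`, `retrieve`, and `build_prompt` are assumptions made for this example.

```python
import re

# Hypothetical in-memory knowledge base; a real system would query
# a vector database or another retrieval tool instead.
KNOWLEDGE_BASE = [
    "Chroma is an open-source vector database.",
    "Pinecone is a managed vector database service.",
    "Bananas are rich in potassium.",
]


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, documents, top_k=2):
    """Toy retriever: rank documents by how many words they share with the query."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(doc)), doc) for doc in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    # Keep only documents that share at least one word with the query.
    return [doc for score, doc in scored[:top_k] if score > 0]


def build_prompt(query, context_docs):
    """Combine retrieved context, an instruction, and the user's query."""
    context = "\n".join(f"- {doc}" for doc in context_docs)
    return (
        "Answer the question using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


query = "Which vector database is open-source?"
context_docs = retrieve(query, KNOWLEDGE_BASE)
prompt = build_prompt(query, context_docs)
print(prompt)  # this enhanced prompt is what would be sent to the LLM
```

The unrelated document about bananas never reaches the prompt, which is the point of the retrieval step: the LLM only sees context that is likely to help with the question.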

What is RAG?

RAG is a technique that helps AI applications generate more accurate and reliable responses by providing them with up-to-date or context-specific data from external sources. This is like giving an AI a cheat sheet to help it answer your questions better.

Benefits of RAG:

- Dynamic Data Access: RAG lets an AI application draw on current information at query time, so its answers can reflect data newer than the model’s training data.

- Mitigated Inaccuracies: RAG reduces the risk of incorrect answers by grounding the model’s response in accurate retrieved data.

- Enhanced Transparency: RAG makes it easier to trace where an answer’s information came from, so you can check responses against their sources.

- Cost-Efficiency: RAG is a cost-effective way to improve the performance of AI applications, since updating a knowledge base is typically cheaper than retraining or fine-tuning a model.

Organizing Knowledge for Efficient Retrieval

Vector indexing plays a crucial role in RAG by efficiently organizing and retrieving information from knowledge bases. In vector indexing, text is transformed into numerical representations known as vectors. These vectors allow the system to quickly identify and retrieve relevant documents or information based on their semantic similarity to the user’s query.

Vector indexes are especially useful for large and complex knowledge bases, where they let the system sift through vast amounts of data to find the most relevant information for the user’s query.
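To make the idea of turning text into vectors and comparing them concrete, here is a small sketch. It assumes a toy `embed` function that builds word-count vectors; this only captures word overlap, whereas a real system would use a learned embedding model that captures meaning. The function names and sample documents are invented for illustration.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy embedding: a sparse word-count vector (stand-in for a learned model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a, b):
    """Cosine of the angle between two sparse vectors; 0.0 if either is empty."""
    dot = sum(a[word] * b[word] for word in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


documents = [
    "The cat sat on the mat",
    "Stock markets fell sharply today",
    "Vector indexes speed up similarity search",
]

# The "index" here is just each document paired with its precomputed vector.
index = [(doc, embed(doc)) for doc in documents]


def search(query, index, top_k=1):
    """Return the top_k documents whose vectors are most similar to the query's."""
    query_vector = embed(query)
    ranked = sorted(
        index,
        key=lambda item: cosine_similarity(query_vector, item[1]),
        reverse=True,
    )
    return [doc for doc, _ in ranked[:top_k]]


best = search("how do vector indexes work", index)
print(best)
```

Precomputing the document vectors once and comparing only the query vector at search time is the core of the approach; production indexes add data structures that avoid comparing against every stored vector.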

Harnessing the Power of Vector Databases for Efficient LLM Operations

Vector databases play a pivotal role in optimizing the performance and efficiency of LLM applications. These databases are designed to store and manage high-dimensional vectors, the numerical representations of text and other data used in those applications.

Compared to traditional databases that store text in its raw form, vector databases offer several advantages for LLM applications:

- Efficient Search and Retrieval: Vector databases provide efficient algorithms for finding similar vectors, so an LLM application can quickly locate relevant information in vast repositories of data.

- Semantic Similarity: Because embeddings capture the semantic and contextual meaning of text, a vector database can find information that is conceptually similar to the user’s query, even when the wording differs.

- Scalability: Vector databases are designed to handle large volumes of data, making them well-suited for real-time applications and large-scale LLM deployments.

The process of converting text into vector representations, known as embedding, is crucial for using vector databases with LLMs. Embeddings capture the semantic and contextual information of text in a numerical format that a vector database can index and compare efficiently.
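As a rough sketch of what a vector database does with stored embeddings, the example below keeps vectors in memory and answers a query by brute-force cosine similarity. The class `InMemoryVectorStore` is hypothetical and is not the API of Chroma, Pinecone, or Weaviate; the three-dimensional vectors are hand-written for illustration, where a real embedding model would produce hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two dense vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class InMemoryVectorStore:
    """Brute-force vector store; a hypothetical stand-in for a vector database."""

    def __init__(self):
        self._records = []  # list of (record_id, vector, text)

    def add(self, record_id, vector, text):
        """Store a vector together with the text it represents."""
        self._records.append((record_id, vector, text))

    def query(self, vector, top_k=1):
        """Return the top_k (score, record_id, text) rows closest to `vector`."""
        scored = [
            (cosine_similarity(vector, stored), record_id, text)
            for record_id, stored, text in self._records
        ]
        scored.sort(key=lambda row: row[0], reverse=True)
        return scored[:top_k]


store = InMemoryVectorStore()
# Hand-written vectors, invented for illustration only.
store.add("doc-1", [0.9, 0.1, 0.0], "How to reset your password")
store.add("doc-2", [0.1, 0.8, 0.3], "Quarterly sales report")
store.add("doc-3", [0.8, 0.3, 0.1], "Recovering a locked account")

query_vector = [1.0, 0.2, 0.0]  # pretend embedding of "I forgot my login"
results = store.query(query_vector, top_k=2)
for score, record_id, text in results:
    print(f"{record_id}  {score:.3f}  {text}")
```

This store compares the query against every record, which is fine for a handful of documents. Real vector databases typically rely on approximate nearest-neighbor indexes so that search stays fast as the collection grows.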

Semantic search, a capability enabled by vector databases, lets LLM applications retrieve information based on its meaning and context rather than exact keyword matches. This helps them give more relevant and comprehensive responses to user queries, even those that are open-ended or ambiguous.
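The difference between keyword matching and semantic search can be shown with a small contrast. The embeddings below are hypothetical two-dimensional vectors assigned by hand so that texts about vehicles point in one direction and texts about cooking in another; in practice an embedding model would produce them.

```python
import math

# Hypothetical hand-assigned embeddings, for illustration only.
EMBEDDINGS = {
    "Tips for maintaining your car": [0.95, 0.05],
    "A simple pasta recipe": [0.05, 0.95],
}
QUERY = "automobile upkeep"
QUERY_EMBEDDING = [0.9, 0.1]  # assumed output of an embedding model for QUERY


def keyword_match(query, documents):
    """Return documents that share at least one exact word with the query."""
    query_words = set(query.lower().split())
    return [doc for doc in documents if query_words & set(doc.lower().split())]


def cosine_similarity(a, b):
    """Cosine similarity between two dense vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def semantic_match(query_embedding, embeddings):
    """Return the document whose embedding is closest to the query embedding."""
    return max(
        embeddings,
        key=lambda doc: cosine_similarity(query_embedding, embeddings[doc]),
    )


keyword_hits = keyword_match(QUERY, EMBEDDINGS)
semantic_hit = semantic_match(QUERY_EMBEDDING, EMBEDDINGS)
print("keyword search:", keyword_hits)
print("semantic search:", semantic_hit)
```

The query shares no words with either document, so the keyword search comes back empty, while the vector comparison still surfaces the car maintenance text because its embedding points in nearly the same direction as the query's.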

Vector databases have emerged as essential tools for enhancing the performance and capabilities of LLMs. Their ability to efficiently store, manage, and search vectorized data makes them indispensable for developing intelligent conversational systems, knowledge bases, and other LLM-powered applications.

Agents and Assistants

RAG-enhanced chatbots, often referred to as agents or assistants, represent a significant leap forward in chatbot technology. These intelligent conversational interfaces can provide users with more comprehensive, informative, and accurate responses, even for complex or open-ended questions.

RAG agents can access and process information from a variety of sources, including knowledge bases, APIs, and even real-time data streams. This allows them to provide users with up-to-date information on a wide range of topics, making them invaluable tools for research, education, customer service, and personal assistance.
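One way to picture an agent drawing on several kinds of sources is a loop over pluggable fetch functions, each tagged with where its snippets came from. Everything here is hypothetical: the three source functions return canned strings, where a real agent would query a knowledge base, call an API over the network, or read from a live feed.

```python
def search_knowledge_base(query):
    """Hypothetical static source, such as product documentation."""
    return ["Refunds are processed within 5 business days."]


def call_orders_api(query):
    """Hypothetical API lookup; a real agent would make a network request."""
    return ["Example order status: shipped."]


def read_live_feed(query):
    """Hypothetical real-time source, such as a status feed."""
    return ["Example feed item: all systems operational."]


# Source names are assumptions chosen for this sketch.
SOURCES = {
    "knowledge_base": search_knowledge_base,
    "orders_api": call_orders_api,
    "live_feed": read_live_feed,
}


def gather_context(query, sources):
    """Query every source and tag each snippet with the source it came from."""
    snippets = []
    for name, fetch in sources.items():
        for text in fetch(query):
            snippets.append({"source": name, "text": text})
    return snippets


context = gather_context("Where is my order and when is my refund due?", SOURCES)
for snippet in context:
    print(f"[{snippet['source']}] {snippet['text']}")
```

Keeping the source name next to each snippet also supports the transparency benefit mentioned earlier, since the final answer can point back to where each piece of information originated.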

Open-Source Platforms

Open-source platforms like LangChain and LlamaIndex have simplified the implementation of RAG-enhanced chatbots. These platforms provide a suite of tools that streamline the orchestration layer and integration with LLMs, making it easier for developers to create and deploy powerful RAG-based chatbots.

These platforms handle the complexities of retrieval, prompt generation, and LLM integration, allowing developers to focus on designing and customizing the user interface and conversation flow. This has democratized the development of RAG-based chatbots, making them accessible to a wider range of users and applications.
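The orchestration these platforms take care of can be pictured as three steps chained together. The sketch below is not the LangChain or LlamaIndex API; `RagPipeline` and the stub functions are invented names, and the "LLM" is a placeholder that only reports the prompt length so the example runs without any external service.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class RagPipeline:
    """Hypothetical orchestration layer: retrieval, prompt building, generation."""

    retrieve: Callable[[str], List[str]]
    build_prompt: Callable[[str, List[str]], str]
    generate: Callable[[str], str]

    def run(self, query: str) -> str:
        context = self.retrieve(query)              # 1. fetch relevant context
        prompt = self.build_prompt(query, context)  # 2. assemble enhanced prompt
        return self.generate(prompt)                # 3. hand the prompt to the LLM


def fake_retrieve(query: str) -> List[str]:
    """Stub retriever returning a fixed snippet."""
    return ["RAG combines retrieval with generation."]


def simple_prompt(query: str, context: List[str]) -> str:
    """Join the context snippets and append the user's question."""
    return "Context:\n" + "\n".join(context) + f"\n\nQuestion: {query}"


def stub_llm(prompt: str) -> str:
    """Placeholder for a real model call."""
    return f"[stub answer based on a prompt of {len(prompt)} characters]"


pipeline = RagPipeline(fake_retrieve, simple_prompt, stub_llm)
answer = pipeline.run("What is RAG?")
print(answer)
```

Because each step is just a callable, a different retriever, prompt template, or model can be swapped in without touching the others, which is roughly the kind of flexibility an orchestration framework provides.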

The Future of LLMs with RAG and Vector Indexing

Retrieval Augmented Generation (RAG) and vector indexing mark a significant step forward in the evolution of LLMs, expanding their capabilities and enabling them to become even more powerful and versatile tools. As these technologies continue to develop, we can expect to see even more innovative applications emerge, transforming the way we interact with technology and access information.

Tech Disillusionment

For four decades, I have worked in the tech industry. I started in the 1980s when computing...

A Poem: The Consultant's Message

On a Friday, cold and gray,

The message came, sharp as steel,

Not from those we...

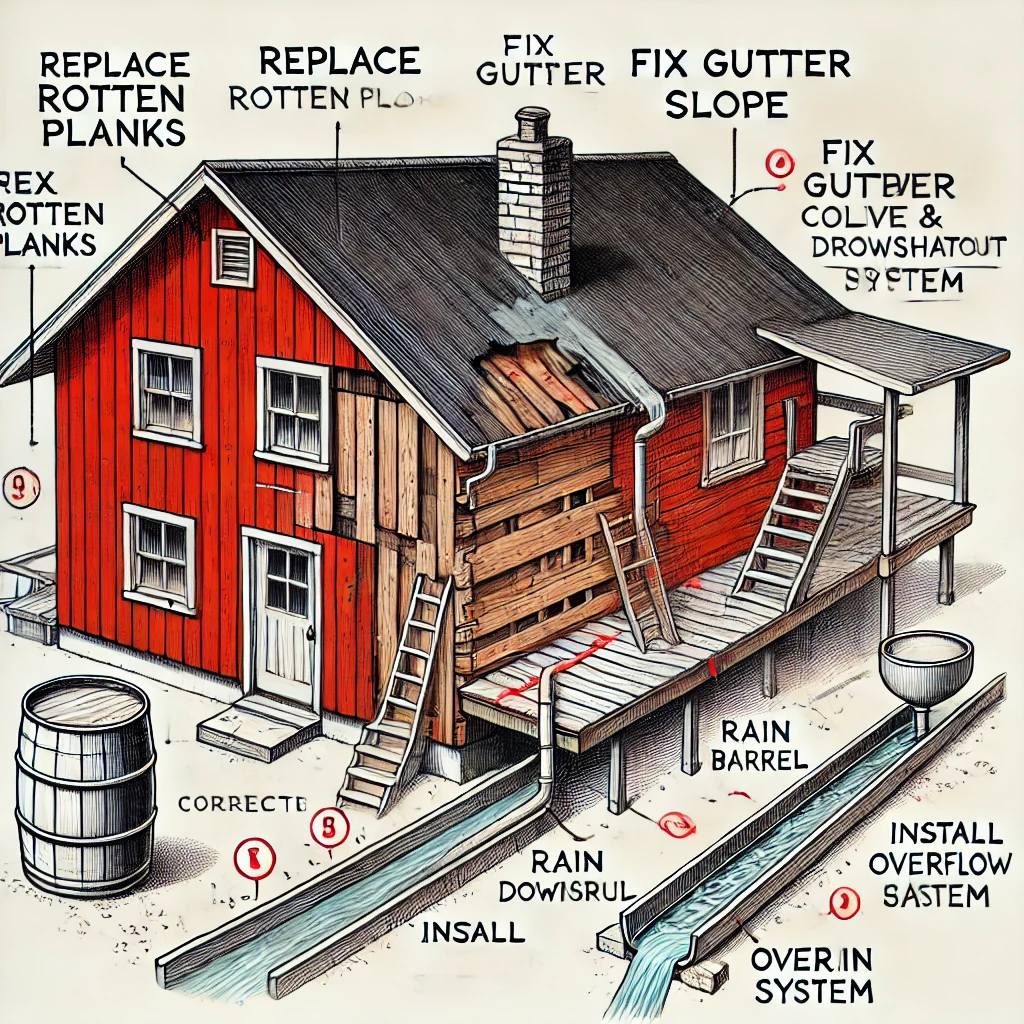

Using AI to Plan Wall Repair and Gutter Installation

In this article, I will share my experience using AI to plan the work required to fix a wall...