#datapipeline: Deploy a Serverless Data Pipeline to GCP with Terraform

by bernt & torsten

I recently built a data pipeline for the hourly electricity rate and wrote it up in an earlier article. What I did not explain there was how I deployed it to the Google Cloud Platform (GCP). In this article, I will show you how I used Terraform to do that.


Terraform is an open-source infrastructure-as-code tool that provides a consistent CLI workflow to manage hundreds of cloud services.

This post provides an overview of the Terraform resources required to configure the infrastructure for creating the Electricity Data Pipeline on Google Cloud Platform (GCP) as illustrated in the diagram below.

Terraform Resources

In order to define the above infrastructure in Terraform, we need the following Terraform resources:

Define the Provider

Since I am deploying to GCP, I set the provider to Google and also define the GCP project ID and the region.
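The provider block can be paired with a version constraint, so that `terraform init` installs a known release of the Google provider. This is only a minimal sketch and not part of the setup described in this post; the `>= 4.0` constraint is an assumed placeholder that you should replace with the version you have actually tested.

```hcl
terraform {
  required_providers {
    google = {
      source  = "hashicorp/google" # registry address of the Google provider
      version = ">= 4.0"           # assumed constraint, adjust to your tested version
    }
  }
}
```

The block can live in the same file as the provider definition or in a separate file in the same directory.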

    provider "google" {
      project = "<project id>"
      region  = "us-central1"
    }

Define a bucket

Next, I define the bucket that stores the source code for the Cloud Function.

    resource "google_storage_bucket" "bucket" {
      name     = "swedish-electricty-prices" # This bucket name must be unique
      location = "us"
    }

Define Archive File

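On my local machine, I define where the Python source code lives (the src directory) and where the generated src.zip file is stored. The archive is then uploaded to the bucket as an object named after its MD5 hash, so a change in the code results in a new object name.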

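    data "archive_file" "src" {
      type        = "zip"
      source_dir  = "${path.root}/../src" # Directory where your Python source code is
      output_path = "${path.root}/../generated/src.zip"
    }

    resource "google_storage_bucket_object" "archive" {
      # Naming the object after the archive's MD5 hash gives every code change a new object name
      name   = "${data.archive_file.src.output_md5}.zip"
      bucket = google_storage_bucket.bucket.name
      # Same file as before, referenced through the data source instead of a repeated path
      source = data.archive_file.src.output_path
    }

Define the Cloud Function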

I define the Cloud Function resource: its name, runtime, memory, timeout, and the entry point of the code, as well as the bucket and archive object from which the code is deployed. The region is taken from the provider.
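Because the function is HTTP-triggered, its URL is what the scheduler calls later on. As an optional extra that is not part of the setup described in this post, an output block can print that URL after `terraform apply`. The output name `function_url` is a hypothetical name chosen for illustration; the `https_trigger_url` attribute is the same one the scheduler job uses.

```hcl
# Optional: expose the trigger URL of the function after `terraform apply`.
# "function_url" is an illustrative name, not part of the original configuration.
output "function_url" {
  description = "HTTPS trigger URL of the Cloud Function"
  value       = google_cloudfunctions_function.function.https_trigger_url
}
```

Terraform does not care about the order of blocks, so this output can sit before or after the function resource itself.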

    resource "google_cloudfunctions_function" "function" {
      name        = "swedish-electricty-prices"
      description = "Trigger for Swedish Electricity Prices"
      runtime     = "python37" # Older runtime; switch to a currently supported Python runtime if needed

      environment_variables = {
        PROJECT_NAME = "<Project Name>",
      }

      available_memory_mb   = 256
      timeout               = 360
      source_archive_bucket = google_storage_bucket.bucket.name
      source_archive_object = google_storage_bucket_object.archive.name
      trigger_http          = true
      entry_point           = "main" # This is the name of the function that will be executed in your Python code
    }

Define Service Account

I need to define a service account that the GCP services involved can use automatically, without my intervention.

    resource "google_service_account" "service_account" {
      account_id   = "swedish-electricty-prices"
      display_name = "Cloud Function Swedish Electricity Prices Invoker Service Account"
    }

Set IAM Role

For the Cloud Scheduler to be able to invoke the Cloud Function, I need to grant the service account the Cloud Functions Invoker role on that function.

    resource "google_cloudfunctions_function_iam_member" "invoker" {
      project        = google_cloudfunctions_function.function.project
      region         = google_cloudfunctions_function.function.region
      cloud_function = google_cloudfunctions_function.function.name
      role           = "roles/cloudfunctions.invoker"
      member         = "serviceAccount:${google_service_account.service_account.email}"
    }

Define the Cloud Scheduler

To wake up the Cloud Function, I need a scheduled job. I create it with Cloud Scheduler and define the details of the job in Terraform.

    resource "google_cloud_scheduler_job" "job" {
      name             = "swedish-electricty-prices"
      description      = "Trigger the ${google_cloudfunctions_function.function.name} Cloud Function daily at 00:10."
      schedule         = "10 0 * * *" # Daily at 00:10 (12:10 AM)
      time_zone        = "Europe/Stockholm"
      attempt_deadline = "320s"

      http_target {
        http_method = "GET"
        uri         = google_cloudfunctions_function.function.https_trigger_url

        oidc_token {
          service_account_email = google_service_account.service_account.email
        }
      }
    }

There are many different ways to deploy a data pipeline. This one is a flexible solution that makes full use of the capabilities of Terraform while creating a low-cost, scalable infrastructure. If you have any questions or ideas on how to improve this approach, you can leave a comment below.

Tech Disillusionment

For four decades, I have worked in the tech industry. I started in the 1980s when computing...

A Poem: The Consultant's Message

On a Friday, cold and gray,

The message came, sharp as steel,

Not from those we...

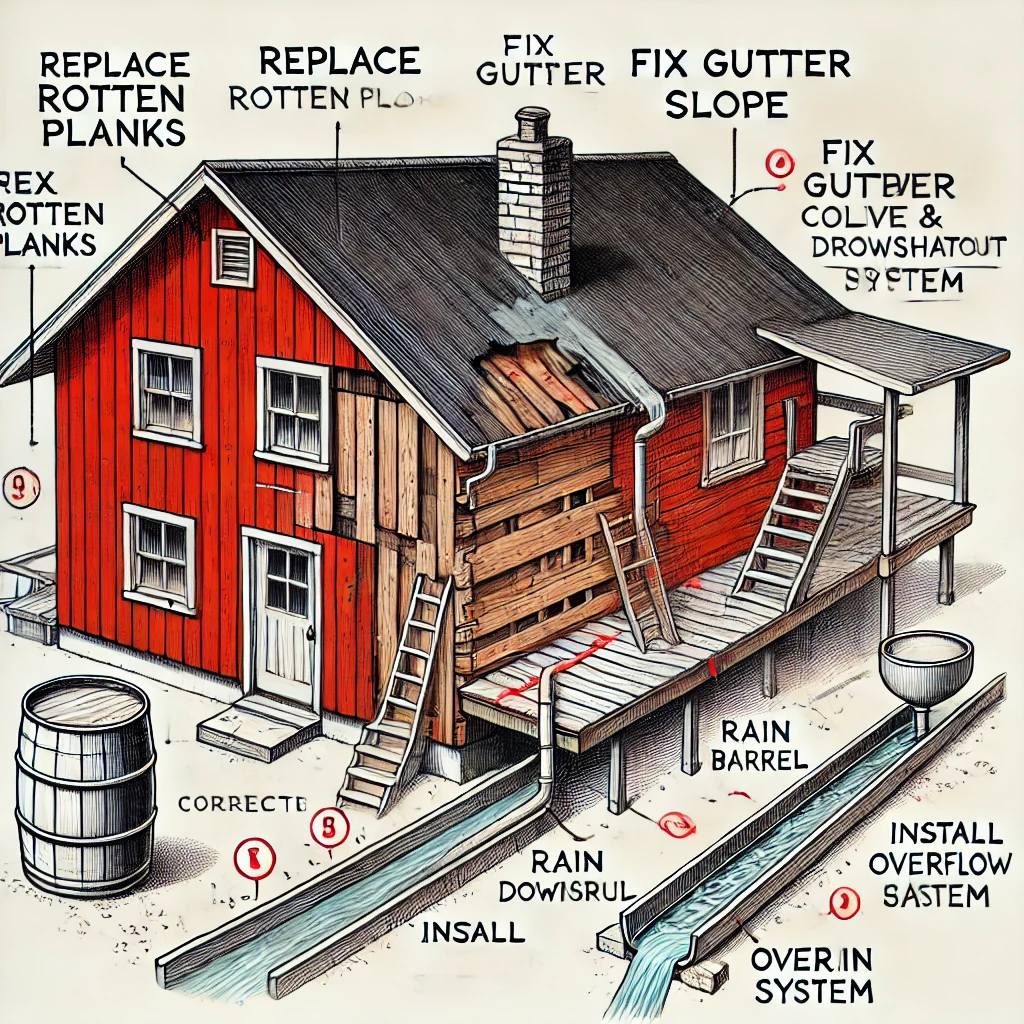

Using AI to Plan Wall Repair and Gutter Installation

In this article, I will share my experience using AI to plan the work required to fix a wall...