AI-Powered Comprehensive Custom Data Insight LLM Implementation Guide

by bernt & torsten

Welcome to the “AI-Powered Custom Data Insights: Comprehensive LLM Implementation Guide.” This guide is a comprehensive template for organizations and professionals seeking to harness the power of Large Language Models (LLMs) to unlock valuable insights from their data.

Introduction to LLM Technology

Large Language Models (LLMs) have revolutionized how we interact with data. They offer a versatile and customizable approach to understanding and extracting valuable information from text-based datasets. Unlike conventional search methods, LLMs empower users to ask questions in their own words, obtaining precise answers tailored to their needs.

Why Use This Template?

This template is designed to help you seamlessly integrate LLM technology into your projects. Whether you’re in finance, manufacturing, telecom, or any field requiring in-depth data analysis, this guide provides a structured roadmap for leveraging LLMs effectively.

By following this template, you’ll be well-equipped to embark on your journey to harness the full potential of LLMs, ensuring that your data becomes a valuable asset for informed decision-making.

Leveraging LLM AI for Customized Data Insights: A Comprehensive Product Strategy

Benefits of Implementing LLM AI for Your Data:

1. Customization for Your Data

- LLM models can be fine-tuned on your specific dataset, ensuring optimized performance tailored to your unique requirements.

- You are not constrained by the pre-trained model; you can mould the AI to serve your business needs.

2. Tailored Question-Answering

- Unlike traditional search methods relying on predefined queries, LLM models allow for highly customized responses.

- Users can phrase questions in a way that makes sense to them, obtaining precise answers aligned with their objectives.

3. Easy Implementation

- LLM models can be seamlessly integrated into projects thanks to readily available libraries and straightforward code.

- A minimal learning curve means the benefits are realized quickly.

4. Rapid Information Retrieval

- LLM models excel at swiftly extracting targeted information from extensive documents or datasets.

- This is invaluable for tasks requiring rapid access to specific details, such as regulatory compliance checks, financial audits, or research endeavors.

5. Versatility Across Domains

- LLM models find applications across various industries, including finance, manufacturing, and telecom.

- Their capability for detailed information extraction makes them a powerful asset in diverse fields.

Overview of the Project:

Part 1: Data Preparation for QnA

- Data Load: Begin by acquiring the necessary data for question-answering, extracting information from web pages or PDF documents.

- Data Split: Divide the data into manageable chunks to ensure efficient processing.

- Generate Embeddings: Transform text into numerical representations (embeddings) for analysis and store them securely.

- Vector Storage: Efficiently store the embeddings in a vector store for quick retrieval during question-answering.
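The split, embed, and store steps above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the guide's actual code: `split_text`, `toy_embedding`, and `build_store` are hypothetical names, the hashed bag-of-words vector stands in for a real embedding model, and a plain Python list stands in for a real vector store.

```python
import hashlib
import math


def split_text(text, chunk_size=200, overlap=50):
    """Split text into character chunks of at most chunk_size, overlapping by `overlap`."""
    if overlap >= chunk_size:
        raise ValueError("overlap must be smaller than chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        # Stop once a chunk has reached the end of the text.
        if start + chunk_size >= len(text):
            break
    return chunks


def toy_embedding(text, dim=64):
    """Hypothetical stand-in for an embedding model: hashed bag-of-words, L2-normalized."""
    vec = [0.0] * dim
    for word in text.lower().split():
        digest = hashlib.sha256(word.encode("utf-8")).digest()
        vec[int.from_bytes(digest[:4], "big") % dim] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


def build_store(text, chunk_size=200, overlap=50):
    """Assumed in-memory 'vector store': one record per chunk with its embedding."""
    return [
        {"text": chunk, "embedding": toy_embedding(chunk)}
        for chunk in split_text(text, chunk_size, overlap)
    ]


sample_text = "Investment firms must keep records of all services and transactions. " * 10
store = build_store(sample_text)
```

The overlap between neighbouring chunks is a common design choice: it reduces the chance that a sentence relevant to a question is cut in half at a chunk boundary. In a real deployment the embedding call and the storage layer would be replaced by the services you actually use.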

Part 2: Question-Answering (QnA)

- Question Embeddings: Generate embeddings for user questions to prepare them for comparison with stored data.

- Retrieve Similar Chunks: Use the vector store's search capabilities to retrieve the relevant data chunks prepared in Part 1.

- Prompt Context Creation: Combine the retrieved data chunks with the user’s question to form the prompt context passed to the LLM.

- Tailored Answer Generation: Leverage LLM models to generate precise, tailored answers based on the provided context and dataset.
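The retrieval and prompt-building steps above might look roughly like the following. This is a sketch under assumptions: the function names, the prompt wording, and the tiny hand-written three-dimensional embeddings are illustrative only, and cosine similarity is assumed as the similarity measure. The final call to an LLM is deliberately left out, since it depends on the provider you use.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve_similar(question_embedding, store, k=2):
    """Return the k stored chunks whose embeddings are closest to the question."""
    ranked = sorted(
        store,
        key=lambda item: cosine_similarity(question_embedding, item["embedding"]),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(question, chunks):
    """Combine retrieved chunks and the user's question into one prompt string."""
    context = "\n\n".join(chunk["text"] for chunk in chunks)
    return (
        "Answer the question using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )


# Hand-written toy store; real embeddings would come from an embedding model.
store = [
    {"text": "Chunk about reporting obligations.", "embedding": [0.9, 0.1, 0.0]},
    {"text": "Chunk about fees.", "embedding": [0.0, 1.0, 0.0]},
    {"text": "Chunk about record keeping.", "embedding": [0.7, 0.0, 0.7]},
]
question = "What must firms report?"
question_embedding = [1.0, 0.0, 0.0]  # assumed output of the same embedding model

top_chunks = retrieve_similar(question_embedding, store, k=2)
prompt = build_prompt(question, top_chunks)
```

The resulting `prompt` string is what would be sent to the LLM. The question must be embedded with the same model as the stored chunks, otherwise the similarity scores are not meaningful.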

Your LLM App Architecture Overview:

Read about an ultimate tech stack that uses Google Cloud Platform

Your Simplest and Most Comprehensive Implementation Guide:

Data Preparation:

- The data_prep() function: This function simplifies data preparation for question-answering by performing the following tasks:

  - Load data from a specified URL and extract text content using BeautifulSoup.

  - Remove unwanted elements like superscripts, spans, tables, lists, etc., from the content.

  - Split the content into smaller, manageable chunks using a text splitter.

  - Calculate embeddings for each chunk using the get_embedding() function.

  - Store the chunks and their embeddings in a Firestore collection named “mifid2”.
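To make the cleaning step more concrete, here is a rough sketch of stripping unwanted elements from HTML before chunking. It is not the guide's `data_prep()` code: it uses Python's standard-library `html.parser` as a stand-in for BeautifulSoup so the example runs without extra dependencies, and the names `TextExtractor`, `extract_clean_text`, and `SKIPPED_TAGS` (including the choice to skip `script` and `style`) are assumptions for illustration.

```python
from html.parser import HTMLParser

# Tags whose content is dropped; the exact set is an assumption.
SKIPPED_TAGS = {"sup", "span", "table", "ul", "ol", "script", "style"}


class TextExtractor(HTMLParser):
    """Collect visible text while ignoring anything nested inside a skipped tag."""

    def __init__(self):
        super().__init__()
        self.skip_depth = 0  # how many skipped tags are currently open
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if tag in SKIPPED_TAGS:
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in SKIPPED_TAGS and self.skip_depth > 0:
            self.skip_depth -= 1

    def handle_data(self, data):
        # Keep text only when no skipped tag is open.
        if self.skip_depth == 0 and data.strip():
            self.parts.append(data.strip())


def extract_clean_text(html):
    """Return the page text with superscripts, spans, tables, and lists removed."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.parts)


sample_html = (
    "<p>Article 1<sup>1</sup> applies to <span>hidden</span>firms.</p>"
    "<table><tr><td>x</td></tr></table><ul><li>item</li></ul>"
)
clean_text = extract_clean_text(sample_html)
```

In practice the HTML would first be fetched from the source URL, and the cleaned text would then be split, embedded, and stored. Note that this simple depth counter assumes well-formed markup: an unclosed skipped tag would suppress all text that follows it.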

You can find more details about the code in the GitHub repository.

This refined product plan should provide a more transparent and organized overview of your LLM AI-based product strategy for executives and stakeholders.

Tech Disillusionment

For four decades, I have worked in the tech industry. I started in the 1980s when computing...

A Poem: The Consultant's Message

On a Friday, cold and gray,

The message came, sharp as steel,

Not from those we...

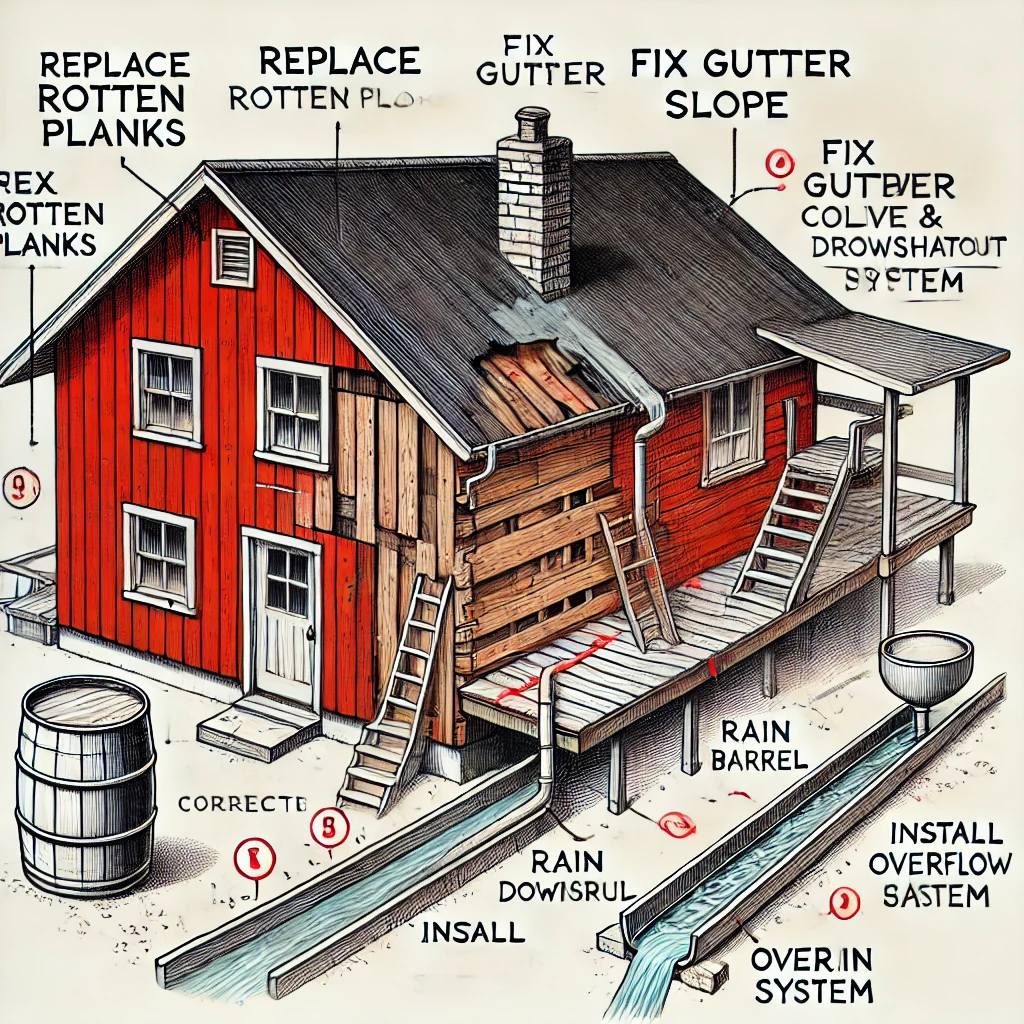

Using AI to Plan Wall Repair and Gutter Installation

In this article, I will share my experience using AI to plan the work required to fix a wall...